SCAD x EA Sprint

BREAKDOWN

problem

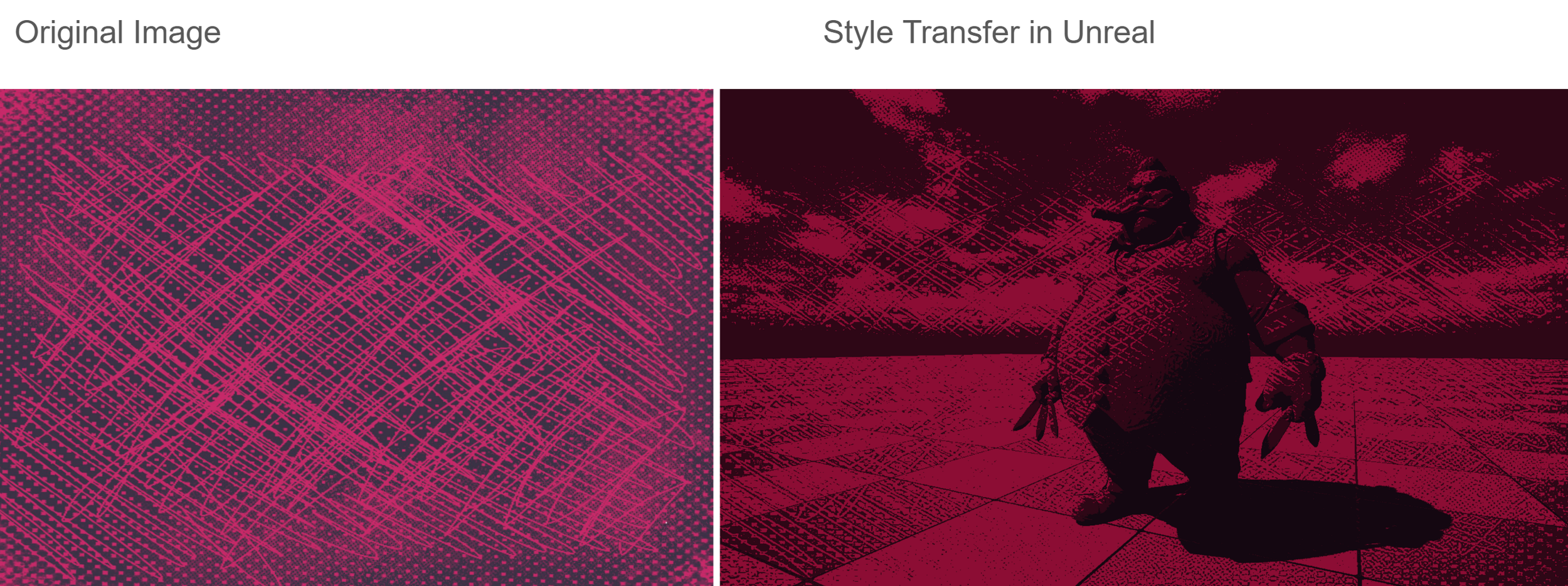

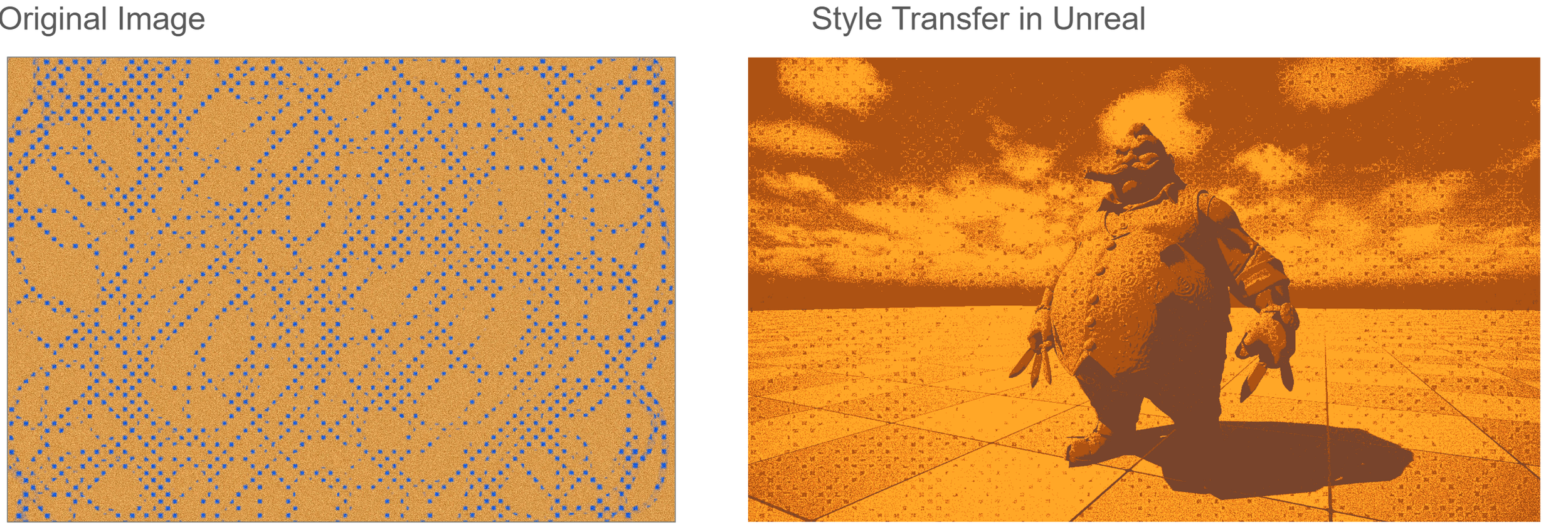

Training every artist to match a single visual style was slow, inconsistent, and difficult to scale across projects.

solution

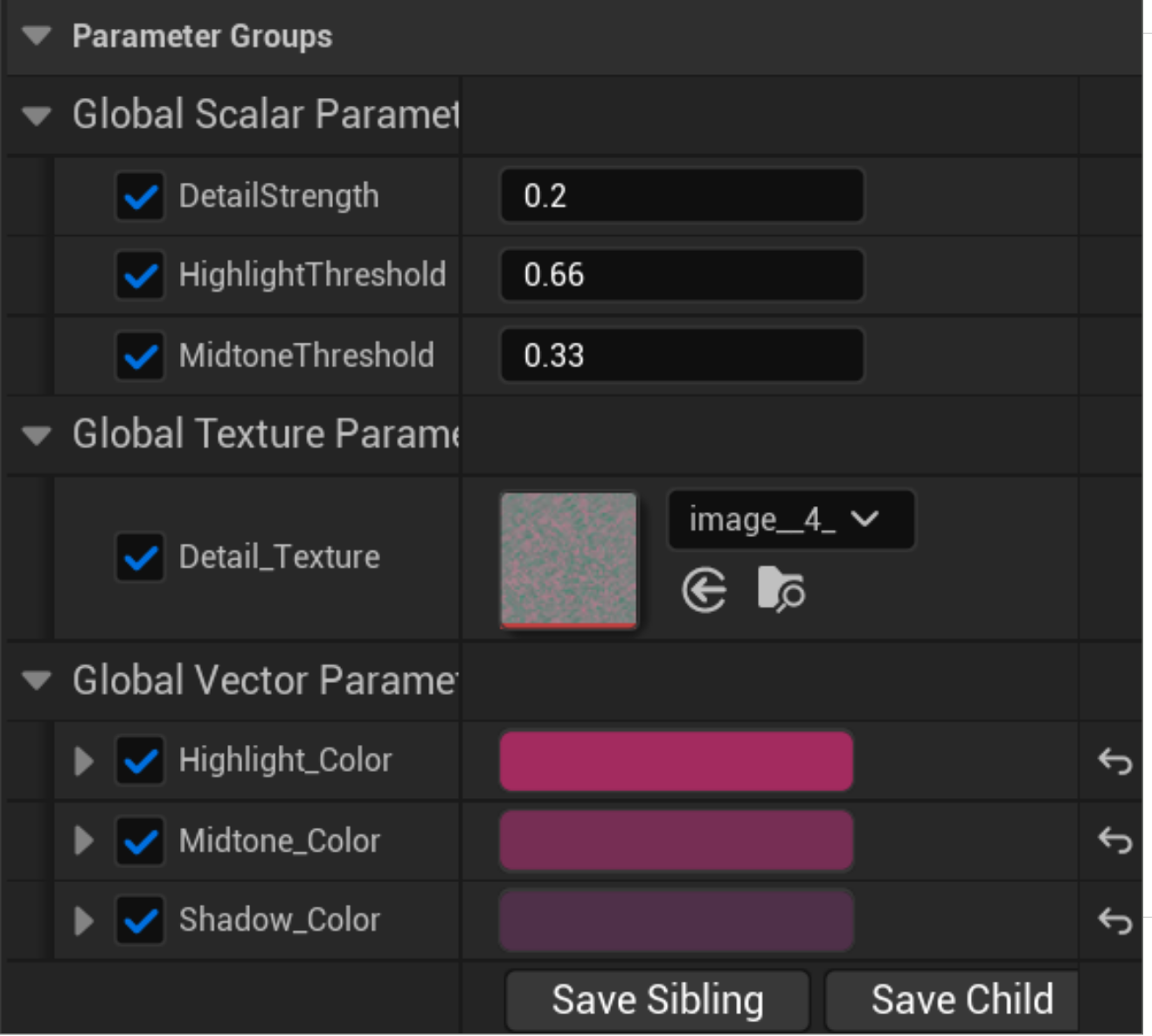

An AI model was modified to analyze a single reference image and automatically extract its tonal values (highlights, midtones, shadows). Those values feed a custom Python pipeline that procedurally builds an Unreal Engine post-process cel shader, so the entire render output adopts the target style consistently without having to retrain artists by hand.

Image Analysis with K-Means

This is where K-Means replaces simple averaging:

Old approach: take all pixels in a zone (e.g. shadows), average their RGB values → one color per zone
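A worked example of what averaging loses, using two hypothetical 8-bit shadow pixels (illustrative values, not taken from the project): a cold blue-black and a warm dark brown.

$$
\tfrac{1}{2}\left[(20,\ 24,\ 48) + (56,\ 36,\ 20)\right] = (38,\ 30,\ 34)
$$

The first pixel is blue-dominant and the second is red-dominant; their mean is a near-neutral dark grey that matches neither.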

New approach:

1. Pixels are sorted into tonal zones (shadows, midtones, highlights) by luminance thresholds, which are either fixed or set dynamically from luminance percentiles.
2. Within each zone, `KMeans(n_clusters=3)` groups the pixels into dominant color clusters.
3. The clusters are sorted by size (most dominant first), and their sizes are normalized into weights that sum to 1.0.

The result is 3 colors per zone instead of 1, each with a proportional weight.

This matters because a single shadow zone might contain both cold blue-blacks and warm dark browns; averaging would muddy them into one grey-brown, whereas K-Means keeps them distinct.
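The zoning and clustering steps can be sketched in plain Python. This is a minimal, dependency-free sketch, not the project's code: it assumes pixels arrive as (r, g, b) tuples in 0-255, uses Rec. 709 luminance weights, and substitutes a hand-written Lloyd's k-means for scikit-learn's `KMeans`. The percentile defaults (33/66) and the helper names (`split_zones`, `dominant_colors`) are hypothetical.

```python
import random

# Rec. 709 luma weights on 0-255 RGB; an assumption, since the breakdown
# does not say which luminance formula the tool uses.
def luminance(rgb):
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def percentile(values, q):
    """Simple index-based percentile (q in 0-100), no interpolation."""
    s = sorted(values)
    k = round(q / 100 * (len(s) - 1))
    return s[max(0, min(len(s) - 1, k))]


def split_zones(pixels, low=None, high=None, pct=(33, 66)):
    """Sort pixels into shadow/midtone/highlight zones by luminance.

    Pass fixed `low`/`high` thresholds, or leave them as None to derive them
    from luminance percentiles (`pct` is a hypothetical default)."""
    lums = [luminance(p) for p in pixels]
    if low is None or high is None:
        low, high = percentile(lums, pct[0]), percentile(lums, pct[1])
    zones = {"shadows": [], "midtones": [], "highlights": []}
    for p, lum in zip(pixels, lums):
        key = "shadows" if lum < low else "midtones" if lum < high else "highlights"
        zones[key].append(p)
    return zones


def _dist2(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _assign(points, centers):
    """Put each point in the group of its nearest center."""
    groups = [[] for _ in centers]
    for p in points:
        nearest = min(range(len(centers)), key=lambda i: _dist2(p, centers[i]))
        groups[nearest].append(p)
    return groups


def kmeans(points, k=3, iters=20, seed=0):
    """Plain Lloyd's k-means, a dependency-free stand-in for KMeans(n_clusters=3)."""
    unique = list(dict.fromkeys(points))  # seed from distinct colors only
    centers = random.Random(seed).sample(unique, min(k, len(unique)))
    for _ in range(iters):
        groups = _assign(points, centers)
        # New center = channel-wise mean of its group (keep old center if empty).
        new = [tuple(sum(ch) / len(g) for ch in zip(*g)) if g else c
               for c, g in zip(centers, groups)]
        if new == centers:
            break
        centers = new
    return centers, _assign(points, centers)


def dominant_colors(zone, k=3):
    """Return [(color, weight), ...], largest cluster first, weights summing to 1."""
    centers, groups = kmeans(zone, k)
    sized = sorted(((c, len(g)) for c, g in zip(centers, groups) if g),
                   key=lambda cn: cn[1], reverse=True)
    total = sum(n for _, n in sized)
    return [(c, n / total) for c, n in sized]
```

For example, `dominant_colors(split_zones(pixels, low=60, high=150)["shadows"])` returns up to three shadow colors with their weights. With scikit-learn available, `km = KMeans(n_clusters=3, n_init=10).fit(np.asarray(zone))` exposes `cluster_centers_` and `labels_`, and `np.bincount(km.labels_)` gives the cluster sizes to normalize the same way.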

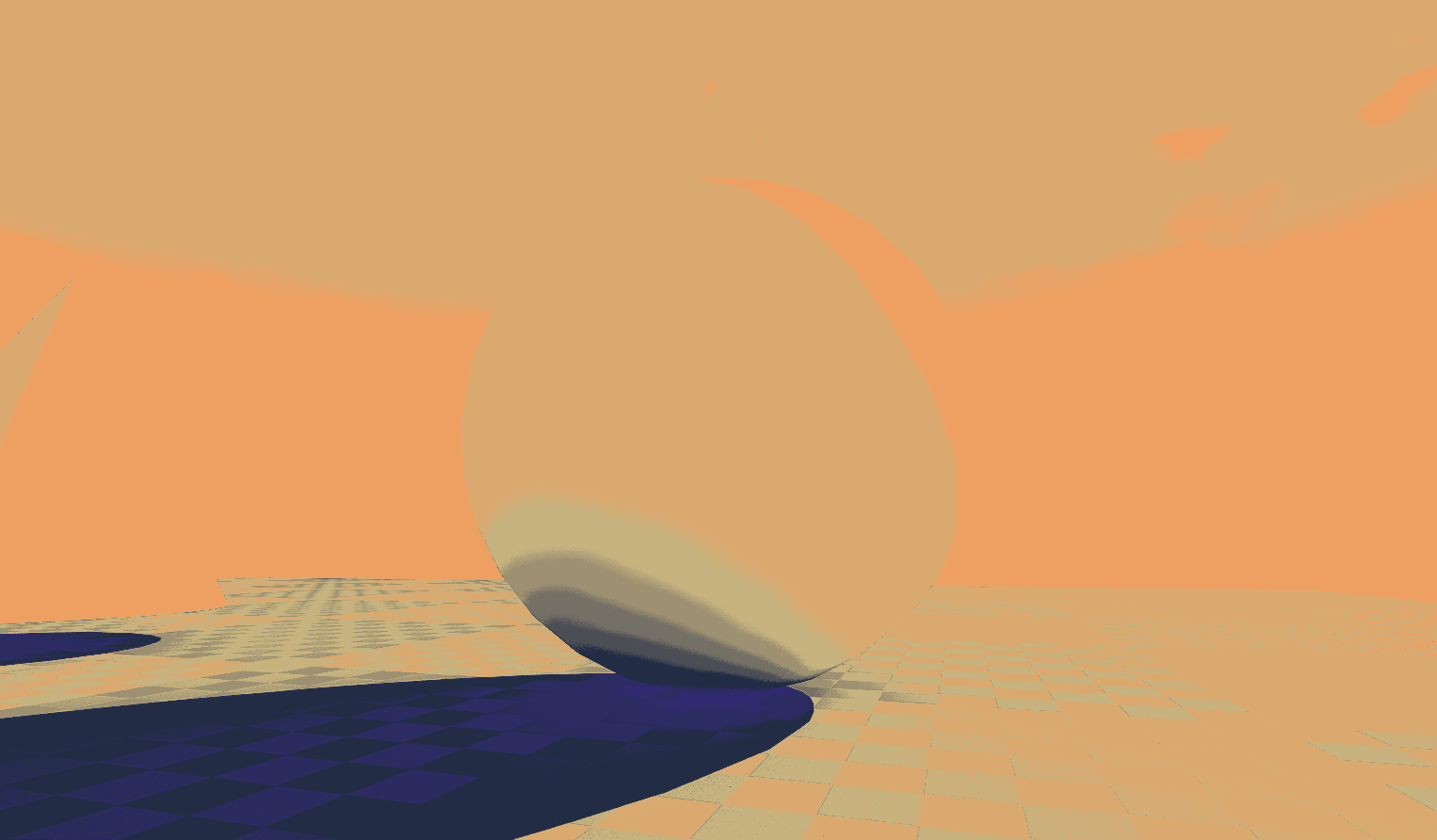

5-Band Material Generation

The analyzed colors are mapped into a 5-band tonal system (upgraded from the earlier 3-band version), where luminance thresholds T0 to T3 separate bands C0 to C4:

| Band | Name | Coverage |
|------|------|----------|
| C0 | Deep Shadow | below T0 |
| C1 | Dark Mid | T0 to T1 |
| C2 | Midtone | T1 to T2 |
| C3 | Light Mid | T2 to T3 |
| C4 | Highlight | above T3 |

These bands use smoothstep masks (not hard cuts), with a Hardness parameter that controls how soft the transitions are. Each band multiplies its color by its mask, and all five results are summed into the emissive output, giving you a full color grade in Unreal built directly from the reference image's palette.
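A CPU-side sketch of the band logic, useful for checking the math outside Unreal. It assumes luminance in [0, 1], hardness in [0, 1], and a hypothetical `max_softness` that sets the widest transition; how the project actually maps Hardness to transition width is not stated, so that mapping, the example thresholds `T`, and the example `PALETTE` are all assumptions.

```python
def smoothstep(edge0, edge1, x):
    """HLSL-style smoothstep; degrades to a hard step when the edges coincide."""
    if edge1 <= edge0:
        return 0.0 if x < edge0 else 1.0
    t = min(max((x - edge0) / (edge1 - edge0), 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)


def band_masks(lum, thresholds, hardness, max_softness=0.1):
    """One mask per band (len(thresholds) + 1 bands).

    hardness = 1.0 gives hard cuts; lower values widen a smoothstep transition
    of half-width (1 - hardness) * max_softness around each threshold. Masks are
    differences of consecutive steps, so they telescope and sum to 1."""
    w = (1.0 - hardness) * max_softness
    steps = [smoothstep(t - w, t + w, lum) for t in thresholds]
    edges = [1.0] + steps + [0.0]
    return [edges[i] - edges[i + 1] for i in range(len(edges) - 1)]


def grade(lum, palette, thresholds, hardness):
    """Emissive output: sum over bands of band color * band mask."""
    masks = band_masks(lum, thresholds, hardness)
    return tuple(sum(m * color[ch] for m, color in zip(masks, palette))
                 for ch in range(3))


# Hypothetical example values: thresholds T0..T3 and band colors C0..C4.
T = [0.2, 0.4, 0.6, 0.8]
PALETTE = [(0.05, 0.05, 0.12), (0.2, 0.15, 0.25), (0.5, 0.4, 0.4),
           (0.8, 0.7, 0.6), (1.0, 0.95, 0.85)]
```

With hardness 1.0, `grade(0.5, PALETTE, T, 1.0)` returns the midtone color C2 unchanged; lowering hardness blends neighboring band colors near each threshold. Building each mask as the difference of two consecutive steps is one way to guarantee the masks sum to 1, so the output is always a blend of palette colors; the actual material may construct its masks differently.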

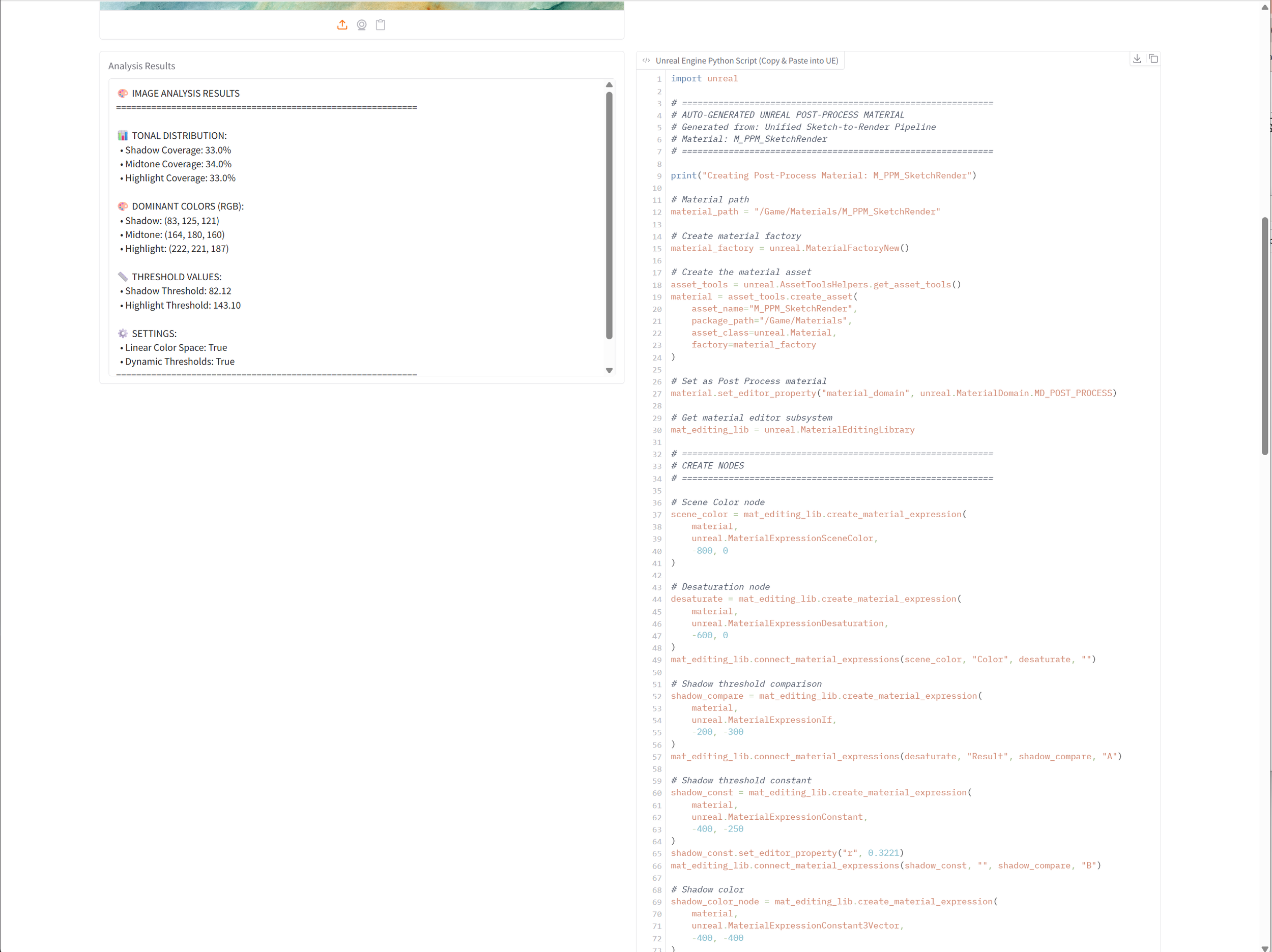

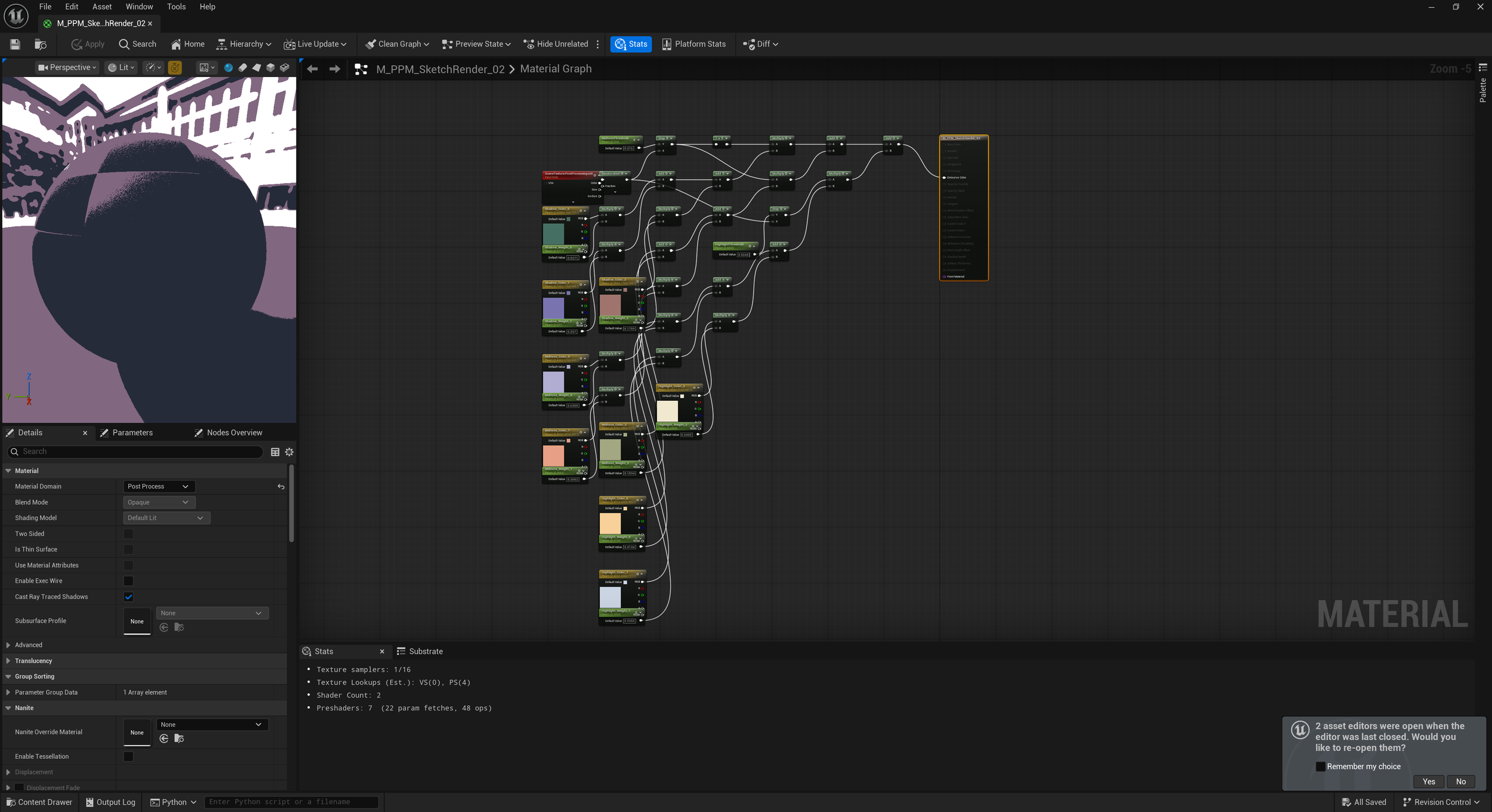

custom Python code

The custom Python script reads the selected parameters (such as Bands and Values) and programmatically builds an Unreal Engine post-process material, wiring nodes in the Material Editor through the Unreal Python API. The generated graph computes luminance from the scene color, builds threshold masks from the band count and hardness, applies a palette color to each band, sums the results, and connects the sum to Emissive Color. The resulting post-process material (PPM) is applied through a Material Instance and a Post Process Volume.
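The first two steps the script wires up, luminance from scene color and thresholds from the band count, can be sketched as plain functions. This is a hedged illustration, not the script itself: Rec. 709 weights on linear RGB in [0, 1] and evenly spaced thresholds are assumptions (the breakdown does not say how thresholds are derived from Bands), and `band_index` is a hypothetical hard-cut helper. In a material graph, the luminance step would typically be a dot product of the scene color with the weights.

```python
from bisect import bisect_right

# Rec. 709 luminance weights for linear RGB in [0, 1]; an assumption, since
# the breakdown does not name the formula used inside the material.
REC709 = (0.2126, 0.7152, 0.0722)


def luminance(rgb, weights=REC709):
    """Weighted sum of the R, G, B channels (a dot product in the material)."""
    return sum(w * c for w, c in zip(weights, rgb))


def even_thresholds(bands):
    """N bands need N - 1 cut points; this hypothetical rule spaces them evenly."""
    if bands < 2:
        raise ValueError("need at least two bands")
    return [i / bands for i in range(1, bands)]


def band_index(lum, thresholds):
    """Index of the band a luminance value falls into (0 = darkest)."""
    return bisect_right(thresholds, lum)
```

Under these assumptions, 5 bands give thresholds `[0.2, 0.4, 0.6, 0.8]` and the older 3-band setup gives cuts at 1/3 and 2/3; a value exactly on a threshold falls into the upper band.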

Generated post-process material

customization

results